IBM and Confluent Announce Strategic Partnership

I’m excited to announce a new strategic partnership with IBM. As part of this partnership, IBM will be reselling Confluent Platform, enabling its customers to leverage their existing IBM relationships for faster, more seamless access to Confluent Platform through a simplified procurement process. Customers will also have a single point of contact through IBM customer support while knowing they can rely upon IBM’s direct line into Confluent support.

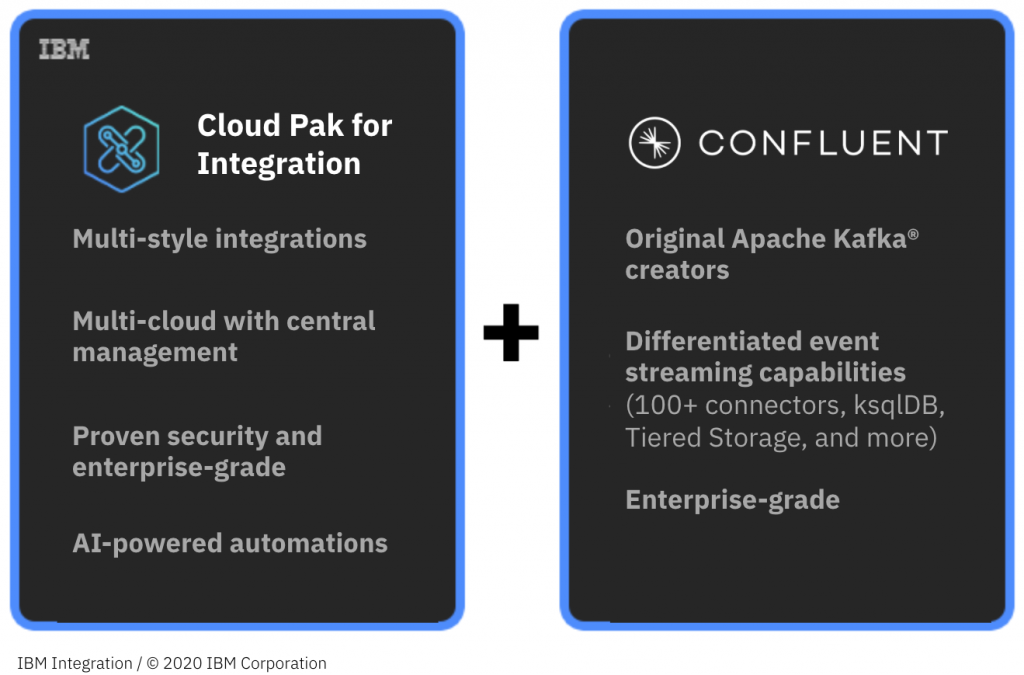

This opportunity empowers even more businesses to accelerate their journey of putting event streaming at the heart of their company. It unlocks the power of the many interconnected data sources and microservices that require infrastructure capable of operating efficiently at massive scale, and it gives enterprises the market’s broadest set of integration capabilities together with the industry’s leading event streaming platform.

IBM Cloud Pak for Integration with Confluent delivers event streaming applications that leverage Apache Kafka, providing a single platform to help businesses unlock value from data in real time.

I also think this reflects what we see happening more broadly: event streams have emerged as a foundational part of the modern software stack. Event streaming not only delivers improved outcomes and unlocks new potential, but also allows developers to build new real-time applications that drive business innovation. By pioneering this approach, Confluent and Apache Kafka® have been at the core of this innovation, enabling web-scale distributed computing, app modernization across private and public clouds, insights and analytics utilizing big data, and real-time applications using continuous stream processing.

While the early adopters of Kafka were individual start-up developers, event streaming is now mainstream. Enterprises around the world are rethinking how to enable this new paradigm so they can deliver real-time applications faster, even if their architecture wasn’t built with event streaming in mind. As Confluent Platform and Kafka become the central nervous system of their business, and with IBM as one of the most trusted integration partners in the world, organizations can now quickly build a bridge between all the powerful data sitting across the enterprise. These streams of data are at the heart of some of the most cutting-edge reinventions of established companies. Through this new strategic partnership with IBM, we’re excited to be at the heart of many more.

To learn more about this new partnership between IBM and Confluent, join the webinar on January 12, 2021, at 10:00 a.m. ET, and check out the IBM announcement by Savio Rodrigues, VP of IBM Application Platform & Integration Offering Management.
